-

Allowing Teams Live Event guests to join anonymously by default

When I started producing a large number of public-facing Teams Live Events, I consistently received feedback that joining the event was too confusing. I agree: the default Microsoft Teams join experience for people who don’t already use Teams can be a little convoluted, especially if you’re not particularly tech savvy in the first instance. You…

-

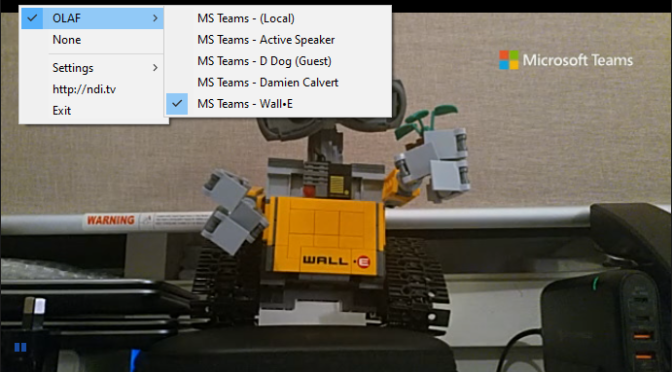

Recording Teams or Skype meetings with NDI

Ever needed more control when recording meetings? Microsoft Teams now includes support for a network video protocol called NDI, which makes it possible to record meeting presenters as individual videos.

-

OneDrive B2B sync

Microsoft released new functionality in the OneDrive sync client that lets users sync libraries or folders in SharePoint or OneDrive that have been shared from other organizations. They have a Docs article up about this B2B Sync capability and list it as still in preview as of the date of this post. To get…

-

Microsoft Flow – Tweet today’s events from an RSS feed

I started a little community project a few years ago called Melbourne Theatre Calendar with the aim of listing all of the theatre shows in Melbourne in a single place. It came out of frustration that when I wanted to go see a show I couldn’t easily see what was playing ‘today’ or ‘tomorrow’. Now…

-

Onsite wifi shooting with multiple Canon EOS 6D

One of my private clients is a photographer and he’s done a pretty good job of trying to keep pace with technology. He made the jump to digital pretty early, worked through the issues with colour and digital print quality, and has installed his own digital photo lab. As camera megapixels increase, storage and…

-

Modifying USMT and KACE to capture Firefox settings and other specific programs

It’s that time in the hardware refresh cycle again where you have to replace laptops en masse; well, at least it is for me. Our main challenge was migrating users’ Firefox bookmarks, along with the desire to capture Outlook signatures and auto-complete information without capturing all Office applications’ information (we wanted to start as fresh…

-

Dell EqualLogic Virtual Storage Manager (VSM) hangs on VASA registration

(I may as well do something useful with this blog, like add content Google can index to help people solve the same problems I’ve encountered in my day-to-day work.) So we run Dell EqualLogic arrays at work and I’ve had a problem getting the storage provider to register with the VMware VASA service.…

-

Phone phishing / fraud still going strong

Well, it seems that phone phishing is sadly alive and rampant in Australia. Yet another client reported they had been cold-called by a company, name given as Global Computer Solutions, claiming their computer had errors. They mentioned that Microsoft had passed on information to them that this person’s computer had errors on it along…

-

Roadtrip stats

And so the roadtrip 2011 has ended. Here are some stats for you: 10,603 km travelled; 1,040 litres of fuel; $1,669 spent on fuel; 9.8 litres per 100 km, average; most expensive fuel, $2.05 per litre (Balladonia WA?); average fuel price, $1.60 per litre; number of times tyres inflated and deflated, approximately a dozen; 497.7…

-

Day 21 – follow the smell of the coffee

The final day in the roadtrip first took us east, back across the border to Mildura. Today was another 700-odd km day, but we did make a few stops. We stopped in Mildura down at Lock 11, where we found it currently not active due to the river level being so…